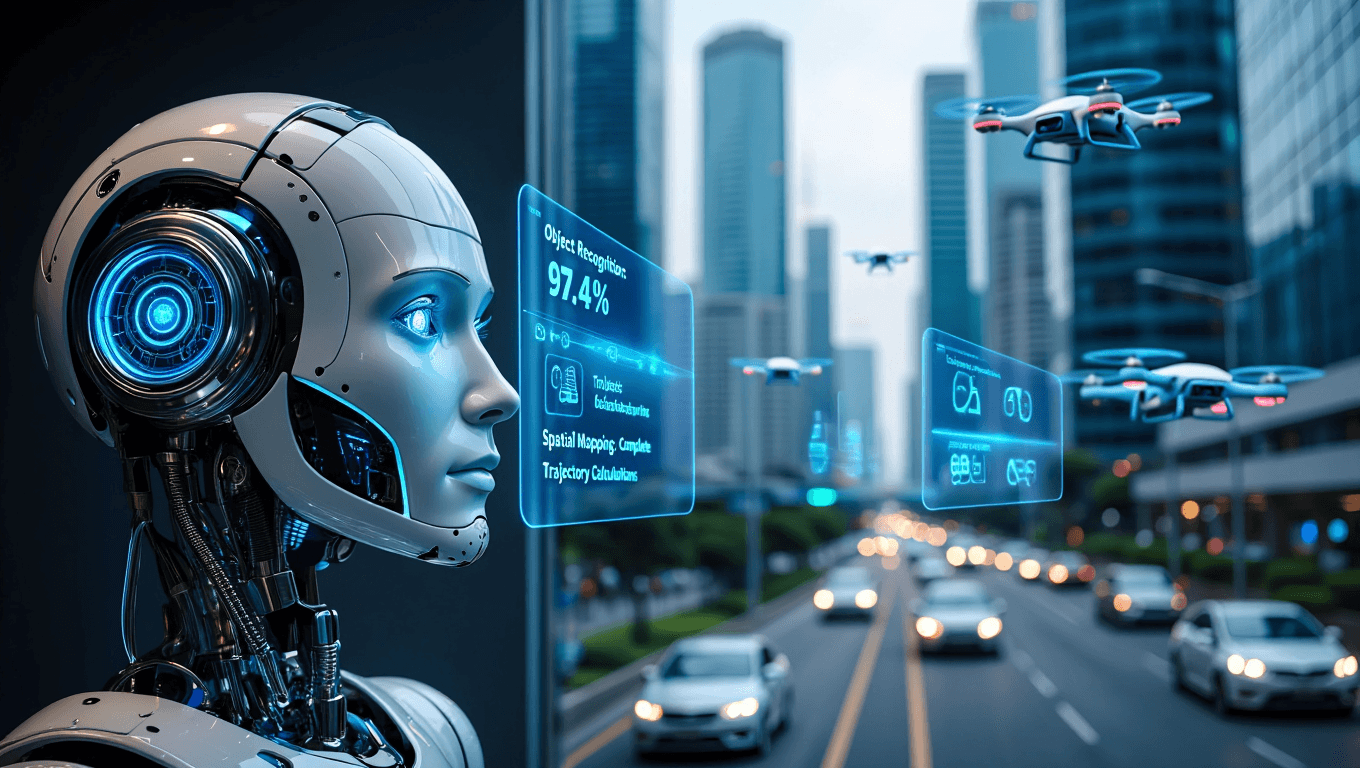

Real-time perception + visual motion prediction for autonomous systems

A Vision-AI framework that boosts situational awareness in low-visibility conditions and predicts the motion of fast-moving objects – directly on edge compute

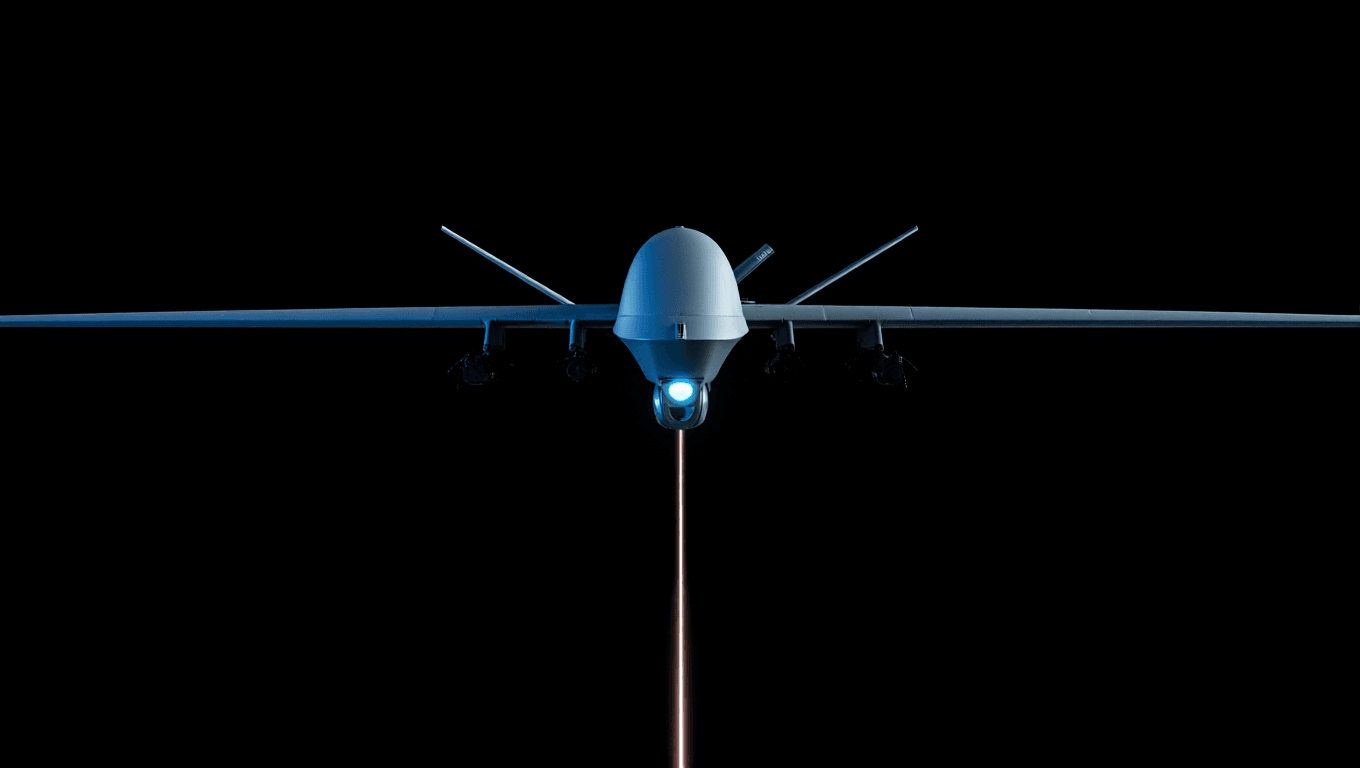

Last-mile Visual Acquisition for Unmanned Platforms

Ora builds AI agents and structured vision workflows that bring computer vision into the real world – supporting border & critical infrastructure protection, ISR / reconnaissance, and search & disaster response. Our edge-ready Vision-AI framework converts imperfect video into stable detections, tracking, and decision cues for autonomy and human-in-the-loop control in degraded visibility, degraded links, and contested RF.

Real-time perception

On-device detection, tracking, and scene understanding tuned for compressed, noisy, or unstable feeds. Designed to keep object acquisition consistent under blur, occlusion, and weather/lighting degradation – within tight power/thermal limits.

Visual motion prediction

Short-horizon motion forecasting from live video to maintain continuity when latency spikes or frames drop. Produces trajectory-aware cues for fast-changing environments and dynamic objects.
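
As a rough illustration of how short-horizon forecasting can bridge dropped frames, the sketch below extrapolates a track with a smoothed constant-velocity model. It is a generic textbook technique, not a description of Ora's predictor; the `Track`, `update`, and `predict` names and the `alpha` smoothing factor are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Track:
    """Hypothetical track state: last observed position plus smoothed velocity."""
    x: float          # last observed centre x, in pixels
    y: float          # last observed centre y, in pixels
    vx: float = 0.0   # smoothed velocity, pixels per second
    vy: float = 0.0
    t: float = 0.0    # timestamp of the last observation, in seconds


def update(track: Track, x: float, y: float, t: float, alpha: float = 0.5) -> Track:
    """Fold in a new observation, smoothing velocity with an exponential filter."""
    dt = t - track.t
    if dt <= 0:
        return track  # ignore out-of-order or duplicate frames
    inst_vx = (x - track.x) / dt
    inst_vy = (y - track.y) / dt
    vx = alpha * inst_vx + (1 - alpha) * track.vx
    vy = alpha * inst_vy + (1 - alpha) * track.vy
    return Track(x, y, vx, vy, t)


def predict(track: Track, t: float) -> Tuple[float, float]:
    """Extrapolate the position to time t, e.g. while frames are missing."""
    dt = t - track.t
    return (track.x + track.vx * dt, track.y + track.vy * dt)
```

When a frame arrives, `update` refreshes the state; when one is late or lost, `predict` supplies an estimated position for the current time so the track does not stall. A constant-velocity assumption only holds over short horizons, which is why the horizon is kept short.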

Decision systems

A decision-support layer that converts perception outputs into actionable recommendations: prioritization, confidence scoring, and event-driven triggers. Built for human-in-the-loop workflows with clear, auditable signals rather than opaque “black-box” commands.
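
To make the idea of auditable signals concrete, here is a minimal sketch of a scoring step that turns a detection into a prioritized cue with a human-readable reason string. The fields (`closing_speed`), the weighting, and the thresholds are all hypothetical choices for illustration, not Ora's scoring logic.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Detection:
    track_id: int
    label: str
    confidence: float     # detector score in [0, 1]
    closing_speed: float  # m/s toward the platform (hypothetical field)


@dataclass(frozen=True)
class Cue:
    track_id: int
    priority: float
    alert: bool
    reason: str  # plain-text audit trail for the operator


def score(det: Detection, alert_threshold: float = 0.6,
          speed_scale: float = 20.0) -> Cue:
    """Combine confidence and urgency into one priority and record why."""
    # Urgency grows with closing speed and is clamped to [0, 1].
    urgency = min(max(det.closing_speed, 0.0) / speed_scale, 1.0)
    priority = det.confidence * (0.5 + 0.5 * urgency)
    alert = priority >= alert_threshold
    reason = (f"conf={det.confidence:.2f} urgency={urgency:.2f} "
              f"priority={priority:.2f} threshold={alert_threshold:.2f}")
    return Cue(det.track_id, priority, alert, reason)


def rank(detections: List[Detection]) -> List[Cue]:
    """Return cues sorted from highest to lowest priority."""
    return sorted((score(d) for d in detections),
                  key=lambda c: c.priority, reverse=True)
```

Each cue carries the inputs that produced it, so an operator or a later review can see why an alert fired instead of receiving a bare command.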

Multi-agent coordination

Structured workflows and agent orchestration that align detections, tracks, and predictions across sensors and platforms (optical/thermal, analog/digital). Enables coordinated autonomy and consistent operator experience in multi-asset scenarios.
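
One simple way to align detections across two sensors is to match bounding boxes by overlap. The sketch below does a greedy intersection-over-union (IoU) match between optical and thermal boxes. It assumes both sets are already registered to a common image frame, and the `associate` function and `min_iou` threshold are illustrative names, not part of any Ora interface.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def associate(optical: List[Box], thermal: List[Box],
              min_iou: float = 0.3) -> List[Tuple[int, int]]:
    """Greedily pair optical and thermal boxes, best overlap first."""
    candidates = sorted(
        ((iou(o, t), i, j)
         for i, o in enumerate(optical)
         for j, t in enumerate(thermal)),
        reverse=True,
    )
    used_optical, used_thermal = set(), set()
    matches = []
    for overlap, i, j in candidates:
        if overlap < min_iou:
            break  # remaining candidates overlap even less
        if i in used_optical or j in used_thermal:
            continue  # each box is matched at most once
        matches.append((i, j))
        used_optical.add(i)
        used_thermal.add(j)
    return matches
```

Greedy matching is easy to reason about and cheap on edge hardware; an optimal assignment method such as the Hungarian algorithm is a common alternative when scenes are crowded.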

Deployable Vision-AI building blocks for real-world autonomy

We design and implement agent-driven vision workflows that move from “model demo” to field-ready capability: on-device perception, motion-aware tracking, and decision cues that stay stable under degraded sensing and degraded links. Our approach is modular by design, so teams can integrate Ora into existing autonomy stacks, payloads, and operator tools without a full platform rebuild.

Use-Case 1

Industrial robotics

Perception that survives real production constraints.

Support robotics in logistics, manufacturing, and inspection where video is compressed, lighting varies, and compute budgets are fixed. Ora focuses on stable detection + tracking and operator-ready cues that reduce false alarms and keep automation reliable in messy environments.

Use-Case 2

Dual-use platforms

Edge vision for unmanned and remote operations.

Built for UGV/UAV and mobile inspection platforms where connectivity isn’t guaranteed. Ora provides an edge-ready perception + prediction layer that remains usable under latency, low-bitrate feeds, and sensor noise, enabling consistent human-in-the-loop control and higher levels of assisted autonomy.

Use-Case 3

Research collaboration

Field validation + data-to-deployment loops.

Collaborate on joint testing and real-world trials: dataset design, robustness evaluation in degraded conditions, and deployment constraints (power/thermal/latency). We’re interested in partners who can help stress-test and benchmark approaches across sensors and environments.

Use-Case 4

Investment & partners

Strategic partnership for scaling autonomy stacks.

We welcome institutional investors and strategic partners who want exposure to dual-use autonomy infrastructure – especially those positioned to accelerate integration, distribution, and validation across platforms and markets.

Key benefits of edge Vision AI for operational autonomy


Reduce operator load. Increase mission throughput. Scale faster.

Book a call to discuss pilots, integration pathways, and collaboration formats.